There were a few typos and other problems in the paper that was posted on Tuesday. The ones that I noticed have been corrected, resulting in a new version of the paper. The original version is preserved at:

http://dl.dropbox.com/u/24876056/CIS565/Old/Paper.pdf

This blog covers the implementation of my final project for the GPU programming and architecture class at the University of Pennsylvania. The aim of the project is to create a deferred renderer that uses screen space classification to determine whether lighting or a post-processing effect need be applied to a certain tile in screen space.

Thursday, April 28, 2011

Tuesday, April 26, 2011

Final Project Deliverables

The project is complete, links to the deliverables follow:

Tuesday, April 19, 2011

Per-object Motion Blur

Today, I was able to add per-object screen-space motion blur. Basically, an object is flagged if it is "animated" (I use the term loosely here because animation in my project just covers a static object moving in a circular path). A screen-space velocity is calculated by comparing the position using the model matrix for the current frame with the position calculated using the model matrix from the previous frame. Changing the view does not actually add any motion-blur. Then, I used the technique in 27.3 of GPU Gems 3, to actually perform the motion blur. As an additional step, the shader also checks the object ID of the sampled pixel to determine whether it belongs to the same object. This way, the blurring effect avoids weird discontinuities that would arise from sampling the non-moving background objects.

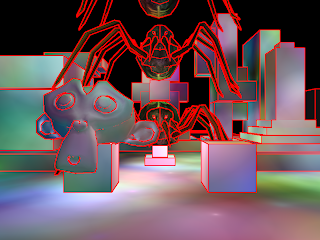

One limitation of this method is that the outlines of the objects aren't blurred. Thus, the effect doesn't look exactly right because the outline of the object is still sharply defined. Posted below are two sample images. The top has motion blur enabled, while the bottom picture doesn't. Only one of the spiders and the monkey head are "animated".

One limitation of this method is that the outlines of the objects aren't blurred. Thus, the effect doesn't look exactly right because the outline of the object is still sharply defined. Posted below are two sample images. The top has motion blur enabled, while the bottom picture doesn't. Only one of the spiders and the monkey head are "animated".

Need glasses?

I was able to implement a first-pass attempt at edge blurring today. The idea is that this can approximate some sort real anti-aliasing. While I am aware that there are much better methods for anti-aliasing in a deferred shader, I am just looking to do something rather simple. Anyway, I used the following link as a inspiration for my edge detection shader: forum post. However, my actual shader for edge detection looks very different; I just briefly looked at the post.

The previous picture shows my edge detector in action. One sort of obvious problem is that some of the lines appear to be thicker than they should be. This will result in more pixels getting blurred than necessary. When I generated the edges, I also generated a horizontal and vertical blur texture. My hope was that I could combine a weighting of these two blurs to achieve a general blur and motion directed blurs. I'm convinced that such a method won't work anymore. I think I will just have to use weighted box blurs for both. A side-by-side comparison of the edge-blur effect with an unblurred image follows:

If you can't tell, the unblurred image is on the right, and the blurred image is on the left. To me, instead of making it appear that there edges are less aliased, the effect of the edge blur is to make the whole image look blurry (hence the title of this post). There is some anti-aliasing effect taking place; for instance, look at the legs of the spider on either side of the image. The whole image might look less blurry if every surface was textured. I guess that I should check the filter with the sponza scene before I discount edge-blurring entirely.

The previous picture shows my edge detector in action. One sort of obvious problem is that some of the lines appear to be thicker than they should be. This will result in more pixels getting blurred than necessary. When I generated the edges, I also generated a horizontal and vertical blur texture. My hope was that I could combine a weighting of these two blurs to achieve a general blur and motion directed blurs. I'm convinced that such a method won't work anymore. I think I will just have to use weighted box blurs for both. A side-by-side comparison of the edge-blur effect with an unblurred image follows:

If you can't tell, the unblurred image is on the right, and the blurred image is on the left. To me, instead of making it appear that there edges are less aliased, the effect of the edge blur is to make the whole image look blurry (hence the title of this post). There is some anti-aliasing effect taking place; for instance, look at the legs of the spider on either side of the image. The whole image might look less blurry if every surface was textured. I guess that I should check the filter with the sponza scene before I discount edge-blurring entirely.

Friday, April 15, 2011

Preliminary Results

After working nearly non-stop for the past couple of days, I finally finished a first-pass attempt at performing the point light calculations in CUDA. This took a lot longer than it probably should have due to lingering bit-packing issues, unfamiliarity with CUDA/OpenGL interop, and the fact that I didn't realize at first that you can't write to a texture in a CUDA kernel. The good news is that the output is nearly identical to both the forward and regular deferred rendering path. The bad news is that the CUDA kernel is slow. For instance, using the default scene with 1024 points lights, I get the following results (quantities are FPS):

Which is pretty discouraging. I still need to implement post-processing effects, so I'm going to hold off on trying to tune the kernel for now. The code is posted below, if anyone has any suggestions though.

| CUDA | Deferred | Forward |

|---|---|---|

| 17.80 | 57.09 | 9.82 |

Which is pretty discouraging. I still need to implement post-processing effects, so I'm going to hold off on trying to tune the kernel for now. The code is posted below, if anyone has any suggestions though.

__global__ void cudaCalculation41(unsigned int numPointLights, float *plFloats, unsigned int *srBounds,

float *invPersp, float *view, int width, int height, uchar4 *outData) {

__shared__ float iP[16];

__shared__ float v[16];

__shared__ bool tileInRect[MAX_BLOCK_SIZE];

__shared__ float pLightInfo[8];

int tdx = threadIdx.x + blockDim.x*threadIdx.y;

int blockS = blockDim.x * blockDim.y;

// Load the inverse perspective and view matrices

if(blockS < 32) {

if(tdx < 16) {

iP[tdx] = invPersp[tdx];

v[tdx] = view[tdx];

}

}

else {

if(tdx < 16) {

iP[tdx] = invPersp[tdx];

}

else if(tdx < 32) {

v[tdx - 16] = view[tdx - 16];

}

}

__syncthreads();

// Calculate texture read coordinates

ushort2 coords;

coords.x = blockDim.x * blockIdx.x + threadIdx.x;

coords.y = blockDim.y * blockIdx.y + threadIdx.y;

// Get the depth and VS position

uchar4 txRead = tex2D(depthTexRef, coords.x, coords.y);

unsigned int depthUInt = txRead.x + txRead.y*256 + txRead.z*256*256 + txRead.w*256*256*256;

float depth = __int_as_float(depthUInt);

float3 vpos = vsPosition(depth, (float)coords.x/width, (float)coords.y/height, iP);

// Get the normal

txRead = tex2D(normalTexRef, coords.x, coords.y);

float n1 = txRead.x/(256.0f*255.0f) + txRead.y/255.0f;

float n2 = txRead.z/(256.0f*255.0f) + txRead.w/255.0f;

n1 = n1*4.0f - 2.0f;

n2 = n2*4.0f - 2.0f;

float f = n1*n1 + n2*n2;

float g = sqrt(1.0f - .25f*f);

float3 normal = make_float3(n1*g, n2*g, 1.0f - .5f*f);

// Get the tile rect-bounds

ushort4 pBounds;

pBounds.x = blockIdx.x * blockDim.x;

pBounds.y = blockIdx.y * blockDim.y;

pBounds.z = pBounds.x + blockDim.x - 1;

pBounds.w = pBounds.y + blockDim.y - 1;

// Initialize color accumulation buffer

float3 color_accum = make_float3(0,0,0);

bool onScreen = (coords.x < width) && (coords.y < height);

uchar4 diffuse;

if(onScreen) {

diffuse = tex2D(diffuseTexRef, coords.x, coords.y);

}

unsigned int numLoops = numPointLights / blockS + (numPointLights % blockS == 0 ? 0 : 1);

// Loop through for all of the lights

for(unsigned int i = 0; i < numLoops; ++i) {

int index = i*blockS + tdx;

// Check to see if a light is in bounds

if(index < numPointLights) {

tileInRect[tdx] = !((srBounds[index] > pBounds.z) ||

(srBounds[index + numPointLights] > pBounds.w) ||

(srBounds[index + 2*numPointLights] < pBounds.x) ||

(srBounds[index + 3*numPointLights] < pBounds.y));

}

else

tileInRect[tdx] = false;

__syncthreads();

// For each light that was in bounds, calculate the light contribution

for(unsigned int j = 0; j < blockS; ++j) {

if(tileInRect[j]) {

// Load the info for a single light into shared memory

if(tdx < 8) {

pLightInfo[tdx] = plFloats[(i*blockS+j)*8 + tdx];

}

__syncthreads();

// Only perform light calculation if the thread corresponds to an on-screen coordinate

if(onScreen) {

float3 lpos = mMult3(v, make_float4(pLightInfo[0], pLightInfo[1], pLightInfo[2], 1.0f));

float d = dist(lpos, vpos);

float attenuate = 1.0f;

if(d > pLightInfo[6])

attenuate = .0f;

else if(d > pLightInfo[7])

attenuate -= (d - pLightInfo[7])/(pLightInfo[6] - pLightInfo[7]);

// N*L*attenuation

float3 lDir = normalize(make_float3(lpos.x - vpos.x, lpos.y - vpos.y, lpos.z - vpos.z));

float factor = dMax(dot(lDir,normal), 0.0f)*attenuate;

// Accumulate the color contribution from the current light

color_accum.x += factor*pLightInfo[3];

color_accum.y += factor*pLightInfo[4];

color_accum.z += factor*pLightInfo[5];

}

__syncthreads();

}

}

}

// Multiply light accumulation by the texture color

if(onScreen)

outData[coords.x + width*coords.y] = make_uchar4(pointFactor*color_accum.x*diffuse.x,

pointFactor*color_accum.y*diffuse.y,

pointFactor*color_accum.z*diffuse.z, 255);

}

Bit-Packing: Depth and Normals

When I discovered that CUDA wouldn't let me register a texture that was of the GL_DEPTH_COMPONENT type, I decided that I needed to write the values of depth into a texture in my g-buffer shader. Also, I had been neglected the task of actually packing the 16-bit normal components into two different channels of an 8-bit RGBA texture. After some searching, I can across the following code for "packing" 16- or 32-bit information into 8-bit channels.

The problem with this is that it doesn't actually preserve the identical bit information from the 32-bit float. Some amount of precision is lost, which leads to an obvious difference between forward and deferred rendering as shown below. The picture on the left is forward, and the picture on the right is deferred.

If you look at the white spot of light in the center of each region, you can see that the one on the left is less sharp, indicating that the associated light source might have been further away.

GLSL 4.0 (and earlier versions with the proper extensions defined) supports direct manipulation of the bits and convenient functions for packing/unpacking floats into integer formats. This allows you to preserve perfect bit information. Unfortunately, I couldn't figure out how to read/output texture data as anything but normalized floating point numbers, even when I requested an internal type of unsigned int. I'm assuming that it is possible to do otherwise, I just couldn't figure out how. Anyway, I was able to develop packing/unpacking methods that seem to do a perfect bit pack/unpack, but I'm a little wary due to the (forced) conversion to normalized floats. The code I ended up using is:

The only problem with this is that it is a bit slower than the less accurate methods posted above. Initially, I thought that they were much slower, but apparently I changed the lighting calculations somewhere between my 3.3 version shaders and my 4.1 version shaders. If anyone comes across this blog and has a more efficient way of packing/unpacking 32- and 16-bit floats into 8-bit RGBA channels, please leave something in the comments.

// Packing and unpacking code courtesy of:

// http://www.ozone3d.net/blogs/lab/20080604/glsl-float-to-rgba8-encoder/

// http://olivers.posterous.com/linear-depth-in-glsl-for-real

vec4 packFloatTo4x8(in float val) {

const vec4 bitSh = vec4(256.0f*256.0f*256.0f, 256.0f*256.0f, 256.0f, 1.0f);

const vec4 bitMsk = vec4(0.0f, 1.0f/256.0f, 1.0f/256.0f, 1.0f/256.0f);

vec4 result = fract(val * bitSh);

result -= result.xxyz * bitMsk;

return result;

}

vec4 pack2FloatTo4x8(in vec2 val) {

const vec2 bitSh = vec2(256.0f, 1.0f);

const vec2 bitMsk = vec2(0.0f, 1.0f/256.0f);

vec2 res1 = fract(val.x * bitSh);

res1 -= res1.xx * bitMsk;

vec2 res2 = fract(val.y * bitSh);

res2 -= res2.xx * bitMsk;

return vec4(res1.x,res1.y,res2.x,res2.y);

}

float unpack4x8ToFloat(in vec4 val) {

const vec4 unshift = vec4(1.0f/(256.0f*256.0f*256.0f), 1.0f/(256.0f*256.0f), 1.0f/256.0f, 1.0f);

return dot(val, unshift);

}

vec2 unpack4x8To2Float(in vec4 val) {

const vec2 unshift = vec2(1.0f/256.0f, 1.0f);

return vec2(dot(val.xy, unshift), dot(val.zw, unshift));

}

The problem with this is that it doesn't actually preserve the identical bit information from the 32-bit float. Some amount of precision is lost, which leads to an obvious difference between forward and deferred rendering as shown below. The picture on the left is forward, and the picture on the right is deferred.

If you look at the white spot of light in the center of each region, you can see that the one on the left is less sharp, indicating that the associated light source might have been further away.

GLSL 4.0 (and earlier versions with the proper extensions defined) supports direct manipulation of the bits and convenient functions for packing/unpacking floats into integer formats. This allows you to preserve perfect bit information. Unfortunately, I couldn't figure out how to read/output texture data as anything but normalized floating point numbers, even when I requested an internal type of unsigned int. I'm assuming that it is possible to do otherwise, I just couldn't figure out how. Anyway, I was able to develop packing/unpacking methods that seem to do a perfect bit pack/unpack, but I'm a little wary due to the (forced) conversion to normalized floats. The code I ended up using is:

vec4 packFloatTo4x8(in float val) {

uint a = floatBitsToInt(val);

return vec4((bitfieldExtract(a,0,8))/256.0f,

(bitfieldExtract(a,8,8))/256.0f,

(bitfieldExtract(a,16,8))/256.0f,

(bitfieldExtract(a,24,8))/256.0f);

}

float unpack4x8ToFloat(in vec4 val) {

uint a = uint(val.x*256.0f+.5f) +

uint(val.y*256.0f+.5f)*256u +

uint(val.z*256.0f+.5f)*256u*256u+

uint(val.w*256.0f+.5f)*256u*256u*256u;

return uintBitsToFloat(a);

}

vec4 pack2FloatTo4x8(in vec2 val) {

uint a = packUnorm2x16(val);

return vec4((bitfieldExtract(a,0,8))/256.0f,

(bitfieldExtract(a,8,8))/256.0f,

(bitfieldExtract(a,16,8))/256.0f,

(bitfieldExtract(a,24,8))/256.0f);

}

vec2 unpack4x8To2Float(in vec4 val) {

uint a = uint(val.x*256.0f+.5f) +

uint(val.y*256.0f+.5f)*256u +

uint(val.z*256.0f+.5f)*256u*256u+

uint(val.w*256.0f+.5f)*256u*256u*256u;

return unpackUnorm2x16(a);

}

The only problem with this is that it is a bit slower than the less accurate methods posted above. Initially, I thought that they were much slower, but apparently I changed the lighting calculations somewhere between my 3.3 version shaders and my 4.1 version shaders. If anyone comes across this blog and has a more efficient way of packing/unpacking 32- and 16-bit floats into 8-bit RGBA channels, please leave something in the comments.

Tuesday, April 12, 2011

16-bit color accumulation target and scissor rects

Rendering point lights onto flat surfaces with no texturing leads to annoying quantization rings that are very visible when only using 8-bits per color. This problem is even worse when two point lights cover the same area, which makes a diamond shaped pattern as in the included screenshot:

In order to help combat this, I changed the forward and basic deferred rendering path to perform the color accumulation from lighting into a 16-bit per channel texture. While this doesn't get rid of the ring effect from a single point light, it does fix the diamond pattern when two point lights intersect. Also, rendering into a texture is better anyway because it is what I will need to do when I start working on post processing effects; plus, if I want to actually perform some sort of HDR scaling to the accumulated colors, this will make it easy to do so.

I also implemented a seemingly correct scissoring rectangle calculation for point lights. It speeds up the deferred shading render by a significant amount depending on the scene and the size of the point lights. On the simple scene (spiders + monkey head + cuboids), the deferred shader is twice as fast as the forward renderer with moderate sized point lights. As the point lights get smaller, this gap in performance increases, with the forward render staying constant and the deferred renderer getting faster. I've attached a picture showing giving a rough view of the scissoring rectangles:

The next step will be to write the tile-based light calculation using CUDA.

In order to help combat this, I changed the forward and basic deferred rendering path to perform the color accumulation from lighting into a 16-bit per channel texture. While this doesn't get rid of the ring effect from a single point light, it does fix the diamond pattern when two point lights intersect. Also, rendering into a texture is better anyway because it is what I will need to do when I start working on post processing effects; plus, if I want to actually perform some sort of HDR scaling to the accumulated colors, this will make it easy to do so.

I also implemented a seemingly correct scissoring rectangle calculation for point lights. It speeds up the deferred shading render by a significant amount depending on the scene and the size of the point lights. On the simple scene (spiders + monkey head + cuboids), the deferred shader is twice as fast as the forward renderer with moderate sized point lights. As the point lights get smaller, this gap in performance increases, with the forward render staying constant and the deferred renderer getting faster. I've attached a picture showing giving a rough view of the scissoring rectangles:

The next step will be to write the tile-based light calculation using CUDA.

Monday, April 11, 2011

Regular Deferred Shading (Partially) Complete

I have the deferred shading implementation mostly complete for the non-tiled approach. The thing that I lacking is calculating screen space quads ("scissor rectangles") that determine the maximum bounds of effect for a given light. I spent a few hours trying to get it working for spot lights last night, but didn't have any luck with the math when the light originates behind the view plane. I am changing the point lights in my project so that they have a limited sphere of influence.

The forward and deferred paths lead to completely identical images, but the deferred path is currently slower due to the fact that a full-screen quad is being rendered for each spot and point light. That requires too many texture reads for deferred shading to surpass forward shading without significantly increasing the complexity of the scene geometry.

Below is a screen of the deferred shading output for the "sponza" scene available from Crytek: http://www.crytek.com/cryengine/cryengine3/downloads

I also need to give an in-class presentation today on the status of my project. To that end I have made a powerpoint, which is available at: Dropbox link.

The next thing that I need to work on is calculating the scissor rectangles for point lights. I found several sources on how to do this for point lights, so I am going to focus on getting point lights working first, then come back to spot lights at the end of the project if I still have time. Next, I will work on the tile-based shading calculations. Finally, I will add the post-processing effects and create a tile-based solution for them as well.

The forward and deferred paths lead to completely identical images, but the deferred path is currently slower due to the fact that a full-screen quad is being rendered for each spot and point light. That requires too many texture reads for deferred shading to surpass forward shading without significantly increasing the complexity of the scene geometry.

Below is a screen of the deferred shading output for the "sponza" scene available from Crytek: http://www.crytek.com/cryengine/cryengine3/downloads

I also need to give an in-class presentation today on the status of my project. To that end I have made a powerpoint, which is available at: Dropbox link.

The next thing that I need to work on is calculating the scissor rectangles for point lights. I found several sources on how to do this for point lights, so I am going to focus on getting point lights working first, then come back to spot lights at the end of the project if I still have time. Next, I will work on the tile-based shading calculations. Finally, I will add the post-processing effects and create a tile-based solution for them as well.

Tuesday, April 5, 2011

Model Loading Complete

I've finally got the model loading as complete as it needs to be for the purposes of this project. As I found out after writing a lot of the code myself, ASSIMP contains options for simplifying loaded scenes into flat structures (one node, with a bunch of pre-transformed meshes). The ASSIMP implementation tends to generate some errors on the COLLADA models that I've plugged in from Google's 3d warehouse. My implementation, on the other hand, was orders of magnitude slower; I decided to scrap it in favor of reasonable speed and just use model files that don't cause the importer to mess up.

Here is a sample image using some random ikea furniture and a bizarre amount of spiders, which shows textures working. Due to perceived time constraints on getting this project finished, I'm not going to do anything fancier than just using diffuse color textures. Plus, none of the models that I've come across include textures for bump- or normal-mapping.

Because some models have textures and others don't, I experimented with using preprocessor defines in the shaders to help me generate two different shaders. While this wasn't really needed for such a simple case, I wanted to make sure that it worked as expected for the when I have to make 16 or 32 shader combinations.

I hope to set up the lighting system and non-tiled deferred rendering path by class on Monday so that I have something to present. Even if I get that far it doesn't even seem like half of the coding portion of the project would even be done; there is still a ton to do, and I hope I can finish it all in three weeks.

Here is a sample image using some random ikea furniture and a bizarre amount of spiders, which shows textures working. Due to perceived time constraints on getting this project finished, I'm not going to do anything fancier than just using diffuse color textures. Plus, none of the models that I've come across include textures for bump- or normal-mapping.

Because some models have textures and others don't, I experimented with using preprocessor defines in the shaders to help me generate two different shaders. While this wasn't really needed for such a simple case, I wanted to make sure that it worked as expected for the when I have to make 16 or 32 shader combinations.

I hope to set up the lighting system and non-tiled deferred rendering path by class on Monday so that I have something to present. Even if I get that far it doesn't even seem like half of the coding portion of the project would even be done; there is still a ton to do, and I hope I can finish it all in three weeks.

Saturday, April 2, 2011

Model Loader Added

After much searching and rejecting a few candidates that didn't integrate well with what I was trying to do, I finally found a model file parser that is suitable for my project. The loader is called "Assimp: Open Asset Import Library," and can be found at: http://assimp.sourceforge.net/. I've included a screenshot showing the street scene from our 3rd homework assignment with a spider model added to it. The image was generated using a simple shader that just outputs the view-space normals as a color. The spider model was included in the assimp download package.

One neat thing about assimp is that it can import a huge variety of file formats, which should allow me to find some interesting geometry to use in my project. As of now, the program only uses the position and normal data to build objects, but I plan to also include support for different materials, textures, and vertex color data for untextured models. I'm not a huge fan of the included model because it includes a split down the center of the face.

My next step will be to implement some kind of singleton objects for managing shader and texture pools and editing the forward renderer to support an arbitrary number of lights (through a multi-pass approach). I also need to set up a system to include simple animation in the scene (probably entire objects moving in circular paths). Once those tasks have been completed, I can finally begin working on the tile-based deferred rendering.

The plan is to implement tiling for both lighting and post-processing. For the lighting stage, the entire light calculation will probably be done in a CUDA kernel. I was looking at an implementation for tile-based lighting in directX (available here), and noticed that it relied entirely on the directX computer shader. Since openGL doesn't yet support anything similar as far as I have been able to tell, it will be necessary to use CUDA instead. For the post-processing, my approach will be somewhat different. The tile classification will still occur in CUDA; however, rendering to the framebuffer will be done by a series of calls to all of the possible shader combinations. Using 4 or 5 post-processing effects will require 16 or 32 shaders to account for all combinations; the shaders will be permuted from an uber-shader using preprocessor defines.

Friday, March 25, 2011

Open Sourced

My final project will be open sourced and hosted at:

http://code.google.com/p/cis565-deferred-shading-project/

As of now, there is hardly any of my code in the repository. For convenience, I plan on using the following libraries, which I have been accustomed to using on the homework assignments: freeglut, glew, glm, and SOIL.

In preparation for my presentation on deferred shading, I have been reading quite a few papers and articles on deferred shading. This has given me a better idea of how I want to set up my g-buffer for the targeted effects. With the current plan, four 32-bit frame buffers will be used, arranged as follows:

http://code.google.com/p/cis565-deferred-shading-project/

As of now, there is hardly any of my code in the repository. For convenience, I plan on using the following libraries, which I have been accustomed to using on the homework assignments: freeglut, glew, glm, and SOIL.

In preparation for my presentation on deferred shading, I have been reading quite a few papers and articles on deferred shading. This has given me a better idea of how I want to set up my g-buffer for the targeted effects. With the current plan, four 32-bit frame buffers will be used, arranged as follows:

- 0 - diffuse.rgb + materialID

- 1 - normal.xy (16 bits each)

- 2 - depth (24 bits) + flags

- 3 - screenSpaceVelocity.xy + objectID

One bit of the flag value will represent the sign of the z component of the normal. However, the storage scheme for the normals will probably be changed. Patrick sent me a very good link about different compression methods that can be used for the normals: http://aras-p.info/texts/CompactNormalStorage.html

Wednesday, March 23, 2011

First Post

The proposal for my project can be found at: http://sites.google.com/site/smt565files/ProjectProposal.pdf

My first step is going to be to figure out what I am going to use for scene geometry. Because my aim is to include motion-blur as a post-processing effect, I need to include animation of some sort. My original thought was to make a simple cubic room with some crudely animated models (i.e., the whole model moves as one unit) flying around inside of it. I didn't plan on using any sort of scene graph, rather each object would have a single world space transform associated with it. The animation component would just be circular paths and rotations around the center of each object. Unfortunately, I haven't come across a suitable library for loading 3d model information.

Now, I am hoping to use simple 3d primitives such as cubes and spheres. I envision a scene full of bouncing balls and light-casting, rotating, stalactites coming down from the ceiling. Seeing as how the project is about a rendering process, I don't think the scene geometry should be very consequential. However, the envisioned scene should provide enough movement and edges for my purposes.

As mentioned in my proposal, the inspiration for the screen-space classification for lighting and post processing comes from the following two sources:

My first step is going to be to figure out what I am going to use for scene geometry. Because my aim is to include motion-blur as a post-processing effect, I need to include animation of some sort. My original thought was to make a simple cubic room with some crudely animated models (i.e., the whole model moves as one unit) flying around inside of it. I didn't plan on using any sort of scene graph, rather each object would have a single world space transform associated with it. The animation component would just be circular paths and rotations around the center of each object. Unfortunately, I haven't come across a suitable library for loading 3d model information.

Now, I am hoping to use simple 3d primitives such as cubes and spheres. I envision a scene full of bouncing balls and light-casting, rotating, stalactites coming down from the ceiling. Seeing as how the project is about a rendering process, I don't think the scene geometry should be very consequential. However, the envisioned scene should provide enough movement and edges for my purposes.

As mentioned in my proposal, the inspiration for the screen-space classification for lighting and post processing comes from the following two sources:

- Hutchinson et al, "Screen Space Classification for Efficient Deferred Shading"

- Johan Andersson, "Parallel Graphics in Frostbite - Current and Future"

At some point I am going to open-source the project code. I'm still not sure whether I am going to use a model loader and what license it would be subject to, so I am going to hold off on doing so for the time being.

Subscribe to:

Posts (Atom)